2018/07 Author: ihunter

I. Introduction

II. Installing the JDK

III. Installing Elasticsearch

IV. Installing Logstash

V. Installing Kibana

VI. Basic Kibana Usage

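System environment: CentOS Linux release 7.4.1708 (Core)

Current problems

Developers cannot log in to the production servers to read detailed logs.

Every system keeps its own logs, so log data is scattered and hard to search.

Log volume is large, so queries are slow or the data is not real-time enough.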

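I. Introduction

1. Composition

ELK consists of three components: Elasticsearch, Logstash, and Kibana.

Elasticsearch is an open-source distributed search engine. Its features include distributed operation, zero configuration, automatic discovery, automatic index sharding, index replicas, a RESTful interface, multiple data sources, and automatic search load balancing.

Logstash is a fully open-source tool that collects and parses your logs and stores them for later use.

Kibana is a free, open-source tool that gives Logstash and Elasticsearch a friendly web interface for log analysis, helping you aggregate, analyze, and search important log data.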

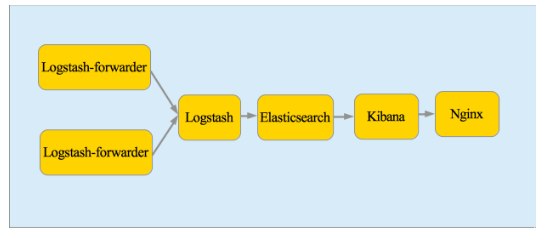

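2. The four components

Logstash: the Logstash server side, which collects logs;

Elasticsearch: stores all kinds of logs;

Kibana: a web interface for searching and visualizing logs;

Logstash Forwarder: the Logstash client side, which sends logs to the Logstash server over the lumberjack network protocol;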

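3. Workflow

Deploy Logstash on every server whose logs need collecting. There it acts as the Logstash agent (Logstash shipper): it watches, filters, and collects the logs, then sends the filtered content to Redis. A Logstash indexer then gathers the logs and hands them to Elasticsearch, the full-text search service. Custom searches run against Elasticsearch, and Kibana presents the results of those searches as web pages.

The environment below is installed on both nodes.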

II. Installing the JDK

Configure the Aliyun mirror: wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

yum clean all

yum makecache

Logstash needs a Java runtime, and Elasticsearch requires at least Java 7.

[root@controller ~]# yum install java-1.8.0-openjdk -y

[root@controller ~]# java -version

openjdk version "1.8.0_151"

OpenJDK Runtime Environment (build 1.8.0_151-b12)

OpenJDK 64-Bit Server VM (build 25.151-b12, mixed mode)

1. Disable the firewall

systemctl stop firewalld.service # stop firewalld

systemctl disable firewalld.service # keep firewalld from starting at boot

2. Disable SELinux

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

setenforce 0

III. Installing Elasticsearch

Base environment setup (run on both elk-node1 and elk-node2)

1) Download and install the GPG key

[root@elk-node1 ~]# rpm --import https://packages.elastic.co/GPG-KEY-elasticsearch

2) Add the yum repository

[root@elk-node1 ~]# vim /etc/yum.repos.d/elasticsearch.repo

[elasticsearch-2.x]

name=Elasticsearch repository for 2.x packages

baseurl=http://packages.elastic.co/elasticsearch/2.x/centos

gpgcheck=1

gpgkey=http://packages.elastic.co/GPG-KEY-elasticsearch

enabled=1
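The repository file can also be written without opening an editor, which helps when the same step has to be repeated on elk-node2. The following is only a sketch: the function name `write_es_repo` is made up for illustration, and the file content is copied from the listing above.

```shell
# Sketch: write the Elasticsearch 2.x yum repository file non-interactively.
# write_es_repo is a hypothetical helper name, not part of any package.
write_es_repo() {
    # $1 = destination file (defaults to the path used in this post)
    dest="${1:-/etc/yum.repos.d/elasticsearch.repo}"
    cat > "$dest" <<'EOF'
[elasticsearch-2.x]
name=Elasticsearch repository for 2.x packages
baseurl=http://packages.elastic.co/elasticsearch/2.x/centos
gpgcheck=1
gpgkey=http://packages.elastic.co/GPG-KEY-elasticsearch
enabled=1
EOF
}

# Example (as root): write_es_repo && yum install -y elasticsearch
```

Passing a different path as the first argument makes it easy to inspect the output before touching /etc/yum.repos.d.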

3) Install Elasticsearch

[root@elk-node1 ~]# yum install -y elasticsearch

4) Enable start at boot

chkconfig --add elasticsearch

5) Register the service with systemd

systemctl daemon-reload

systemctl enable elasticsearch.service

6) Edit the configuration

mkdir -p /data/es-data

vi /etc/elasticsearch/elasticsearch.yml

cluster.name: hejianlai # cluster name

node.name: elk-node1 # node name

path.data: /data/es-data # data directory

path.logs: /var/log/elasticsearch/ # log directory

bootstrap.memory_lock: true # lock the process memory so it is not swapped out

network.host: 0.0.0.0 # listen address

http.port: 9200 # HTTP port

discovery.zen.ping.multicast.enabled: false # disable multicast and use unicast

discovery.zen.ping.unicast.hosts: ["192.168.247.135", "192.168.247.133"]

service elasticsearch start # centos6

[root@elk-node1 elasticsearch]# systemctl start elasticsearch

[root@elk-node1 elasticsearch]# systemctl status elasticsearch

● elasticsearch.service - Elasticsearch

Loaded: loaded (/usr/lib/systemd/system/elasticsearch.service; disabled; vendor preset: disabled)

Active: failed (Result: exit-code) since Thu 2018-07-12 22:00:47 CST; 9s ago

Docs: http://www.elastic.co

Process: 22333 ExecStart=/usr/share/elasticsearch/bin/elasticsearch -Des.pidfile=${PID_DIR}/elasticsearch.pid -Des.default.path.home=${ES_HOME} -Des.default.path.logs=${LOG_DIR} -Des.default.path.data=${DATA_DIR} -Des.default.path.conf=${CONF_DIR} (code=exited, status=1/FAILURE)

Process: 22331 ExecStartPre=/usr/share/elasticsearch/bin/elasticsearch-systemd-pre-exec (code=exited, status=0/SUCCESS)

Main PID: 22333 (code=exited, status=1/FAILURE)

Jul 12 22:00:47 elk-node1 elasticsearch[22333]: at sun.nio.fs.UnixException.rethrowAsIOException(UnixException.java:102)

Jul 12 22:00:47 elk-node1 elasticsearch[22333]: at sun.nio.fs.UnixException.rethrowAsIOException(UnixException.java:107)

Jul 12 22:00:47 elk-node1 elasticsearch[22333]: at sun.nio.fs.UnixFileSystemProvider.createDirectory(UnixFileSystemProvider.java:384)

Jul 12 22:00:47 elk-node1 elasticsearch[22333]: at java.nio.file.Files.createDirectory(Files.java:674)

Jul 12 22:00:47 elk-node1 elasticsearch[22333]: at java.nio.file.Files.createAndCheckIsDirectory(Files.java:781)

Jul 12 22:00:47 elk-node1 elasticsearch[22333]: at java.nio.file.Files.createDirectories(Files.java:767)

Jul 12 22:00:47 elk-node1 elasticsearch[22333]: at org.elasticsearch.bootstrap.Security.ensureDirectoryExists(Security.java:337)

Jul 12 22:00:47 elk-node1 systemd[1]: elasticsearch.service: main process exited, code=exited, status=1/FAILURE

Jul 12 22:00:47 elk-node1 systemd[1]: Unit elasticsearch.service entered failed state.

Jul 12 22:00:47 elk-node1 systemd[1]: elasticsearch.service failed.

[root@elk-node1 elasticsearch]# cd /var/log/elasticsearch/

[root@elk-node1 elasticsearch]# ll

total 4

-rw-r--r-- 1 elasticsearch elasticsearch 0 Jul 12 22:00 hejianlai_deprecation.log

-rw-r--r-- 1 elasticsearch elasticsearch 0 Jul 12 22:00 hejianlai_index_indexing_slowlog.log

-rw-r--r-- 1 elasticsearch elasticsearch 0 Jul 12 22:00 hejianlai_index_search_slowlog.log

-rw-r--r-- 1 elasticsearch elasticsearch 2232 Jul 12 22:00 hejianlai.log

[root@elk-node1 elasticsearch]# tail hejianlai.log

at sun.nio.fs.UnixException.translateToIOException(UnixException.java:84)

at sun.nio.fs.UnixException.rethrowAsIOException(UnixException.java:102)

at sun.nio.fs.UnixException.rethrowAsIOException(UnixException.java:107)

at sun.nio.fs.UnixFileSystemProvider.createDirectory(UnixFileSystemProvider.java:384)

at java.nio.file.Files.createDirectory(Files.java:674)

at java.nio.file.Files.createAndCheckIsDirectory(Files.java:781)

at java.nio.file.Files.createDirectories(Files.java:767)

at org.elasticsearch.bootstrap.Security.ensureDirectoryExists(Security.java:337)

at org.elasticsearch.bootstrap.Security.addPath(Security.java:314)

... 7 more

[root@elk-node1 elasticsearch]# less hejianlai.log

[root@elk-node1 elasticsearch]# grep elas /etc/passwd

elasticsearch:x:991:988:elasticsearch user:/home/elasticsearch:/sbin/nologin

# The error means the elasticsearch user has no permission on /data/es-data; giving it ownership fixes the problem

[root@elk-node1 elasticsearch]# chown -R elasticsearch:elasticsearch /data/es-data/

[root@elk-node1 elasticsearch]# systemctl start elasticsearch

[root@elk-node1 elasticsearch]# systemctl status elasticsearch

● elasticsearch.service - Elasticsearch

Loaded: loaded (/usr/lib/systemd/system/elasticsearch.service; disabled; vendor preset: disabled)

Active: active (running) since Thu 2018-07-12 22:03:28 CST; 4s ago

Docs: http://www.elastic.co

Process: 22398 ExecStartPre=/usr/share/elasticsearch/bin/elasticsearch-systemd-pre-exec (code=exited, status=0/SUCCESS)

Main PID: 22400 (java)

CGroup: /system.slice/elasticsearch.service

└─22400 /bin/java -Xms256m -Xmx1g -Djava.awt.headless=true -XX:+UseParNewGC -XX:+UseConcMarkSweepGC -XX:CMSInitiatingOccupancyFraction=75 -XX:+UseCMSInitiatingOccupancyOnly -XX:+HeapDumpOnOutOfMe...

Jul 12 22:03:29 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:29,739][WARN ][bootstrap ] If you are logged in interactively, you will have to re-login for the new limits to take effect.

Jul 12 22:03:29 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:29,899][INFO ][node ] [elk-node1] version[2.4.6], pid[22400], build[5376dca/2017-07-18T12:17:44Z]

Jul 12 22:03:29 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:29,899][INFO ][node ] [elk-node1] initializing ...

Jul 12 22:03:30 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:30,644][INFO ][plugins ] [elk-node1] modules [reindex, lang-expression, lang-groovy], plugins [], sites []

Jul 12 22:03:30 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:30,845][INFO ][env ] [elk-node1] using [1] data paths, mounts [[/ (rootfs)]], net usable_space [1.7gb], n...types [rootfs]

Jul 12 22:03:30 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:30,845][INFO ][env ] [elk-node1] heap size [1007.3mb], compressed ordinary object pointers [true]

Jul 12 22:03:33 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:33,149][INFO ][node ] [elk-node1] initialized

Jul 12 22:03:33 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:33,149][INFO ][node ] [elk-node1] starting ...

Jul 12 22:03:33 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:33,333][INFO ][transport ] [elk-node1] publish_address {192.168.247.135:9300}, bound_addresses {[::]:9300}

Jul 12 22:03:33 elk-node1 elasticsearch[22400]: [2018-07-12 22:03:33,345][INFO ][discovery ] [elk-node1] hejianlai/iUUTEKhyTxyL78aGtrrBOw

Hint: Some lines were ellipsized, use -l to show in full.
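The first start failed because /data/es-data was created by root, so the elasticsearch user could not write to it. A small pre-flight check can catch this before the service is started. This is a minimal sketch, assuming GNU `stat` (as shipped with CentOS); the function name `check_data_dir_owner` is hypothetical.

```shell
# Sketch: confirm that a data directory exists and belongs to the expected
# user before starting the service. check_data_dir_owner is a made-up name.
check_data_dir_owner() {
    dir="$1"
    expected_user="$2"
    if [ ! -d "$dir" ]; then
        echo "missing: $dir" >&2
        return 2
    fi
    owner="$(stat -c '%U' "$dir")"   # GNU stat: print the owner's user name
    if [ "$owner" != "$expected_user" ]; then
        echo "wrong owner: $dir belongs to $owner, expected $expected_user" >&2
        return 1
    fi
    echo "ok: $dir belongs to $expected_user"
}

# Example (not run here):
#   check_data_dir_owner /data/es-data elasticsearch && systemctl start elasticsearch
```

The function returns 2 for a missing directory and 1 for a wrong owner, so a calling script can tell the two cases apart.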

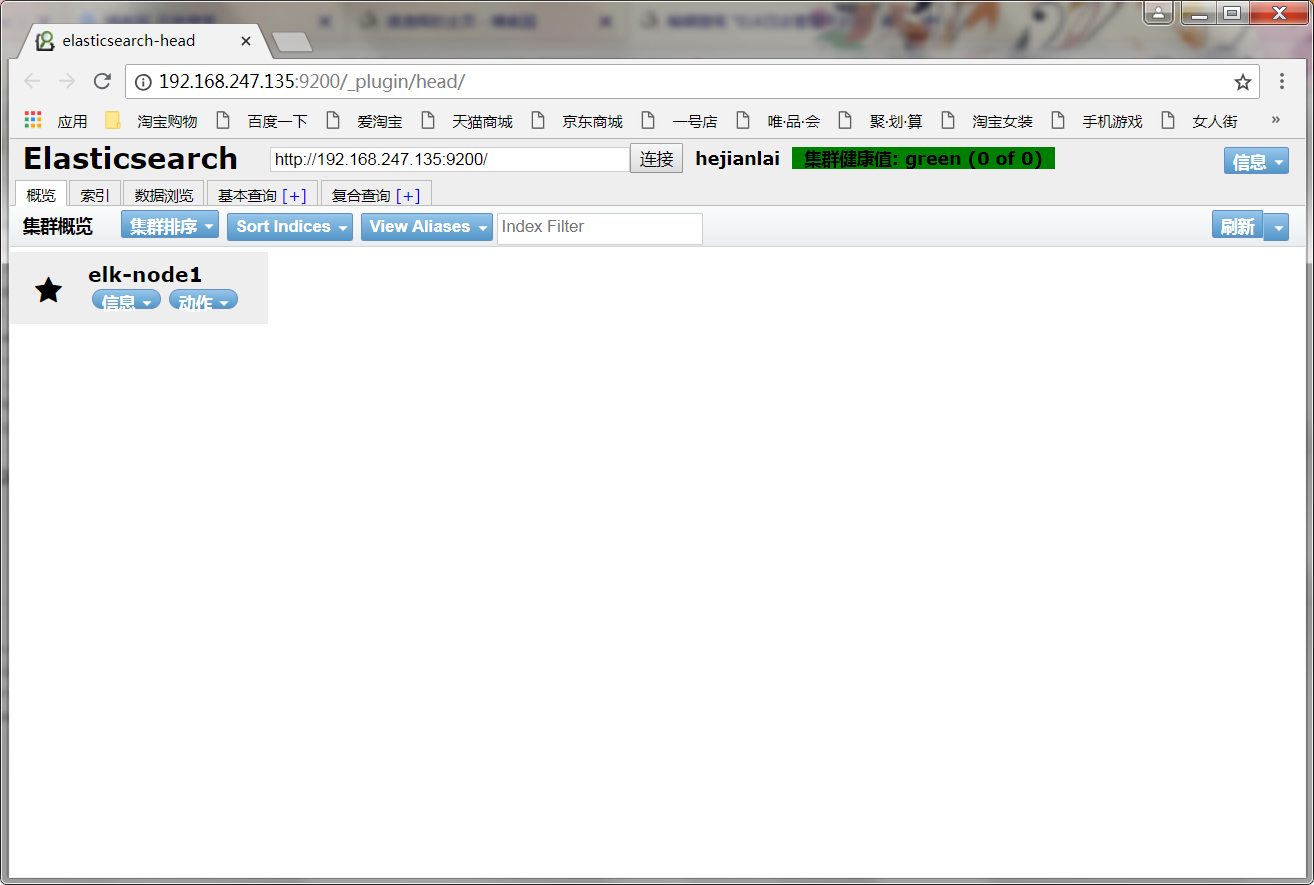

Access URL: http://192.168.247.135:9200/

Installing ES plugins

# Count the documents in the cluster

[root@elk-node1 ~]# curl -i -XGET 'http://192.168.247.135:9200/_count?pretty' -d '{

> "query": {

> "match_all": {}

> }

> }'

HTTP/1.1 200 OK

Content-Type: application/json; charset=UTF-8

Content-Length: 95

{

"count" : 0,

"_shards" : {

"total" : 0,

"successful" : 0,

"failed" : 0

}

}

# Marvel is a paid ES plugin and is not recommended (it is not installed here)

[root@elk-node1 bin]# /usr/share/elasticsearch/bin/plugin install marvel-agent

# Install the open-source elasticsearch-head plugin

[root@elk-node1 bin]# /usr/share/elasticsearch/bin/plugin install mobz/elasticsearch-head

-> Installing mobz/elasticsearch-head...

Trying https://github.com/mobz/elasticsearch-head/archive/master.zip ...

Downloading ...........................................................................................................................................DONE

Verifying https://github.com/mobz/elasticsearch-head/archive/master.zip checksums if available ...

NOTE: Unable to verify checksum for downloaded plugin (unable to find .sha1 or .md5 file to verify)

Access URL: http://192.168.247.135:9200/_plugin/head/

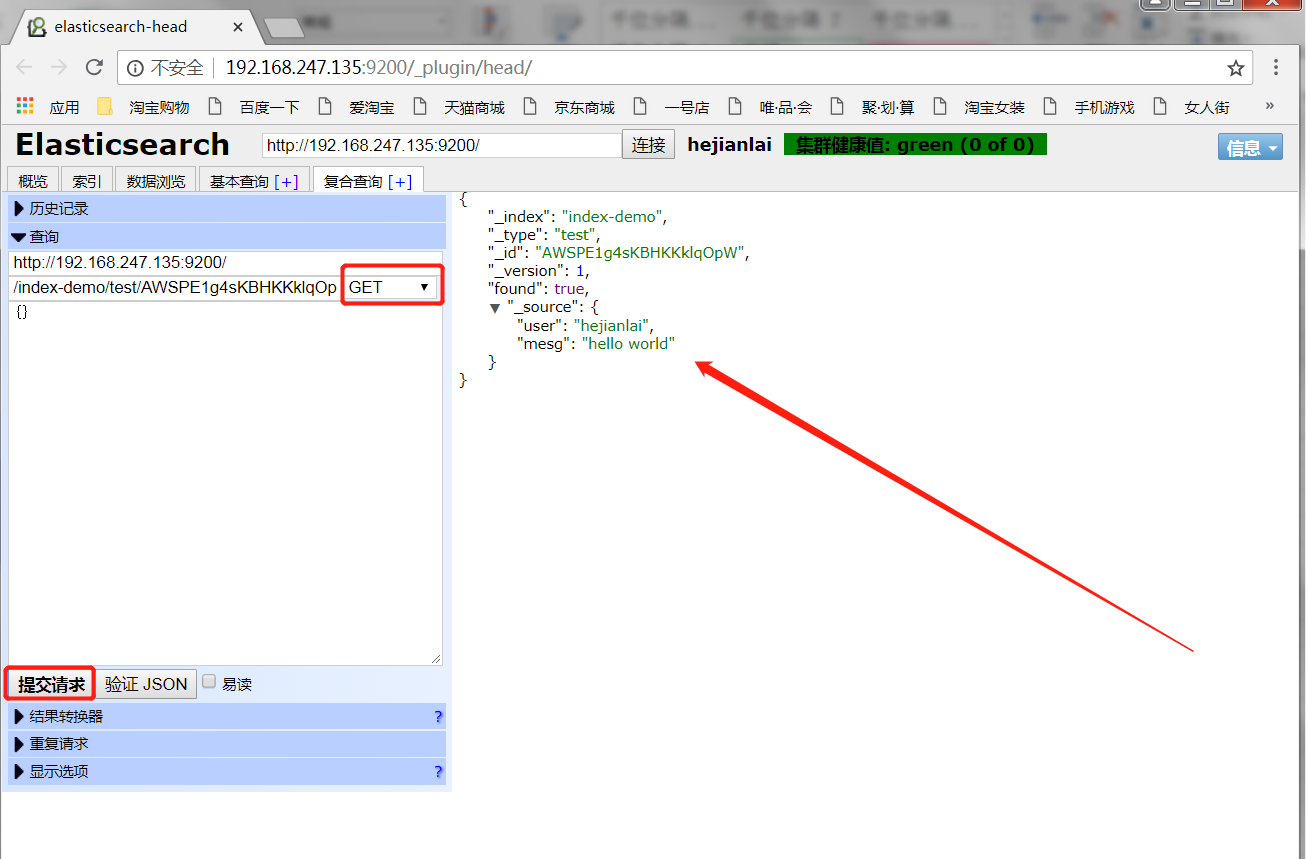

Creating a query with the POST method

Querying data with the GET method

Basic query

elk-node2 configuration

[root@elk-node2 elasticsearch]# grep '^[a-z]' /etc/elasticsearch/elasticsearch.yml

cluster.name: hejianlai

node.name: elk-node2

path.data: /data/es-data

path.logs: /var/log/elasticsearch/

bootstrap.memory_lock: true

network.host: 0.0.0.0

http.port: 9200

discovery.zen.ping.multicast.enabled: false

discovery.zen.ping.unicast.hosts: ["192.168.247.135", "192.168.247.133"]

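When building a multi-node Elasticsearch (ES) cluster, it is usually enough to set the same cluster.name in elasticsearch.yml on every node; ES then finds the other nodes and forms the cluster automatically. Often, however, the nodes sit in different network segments and automatic discovery fails. In that case, switch to unicast and list the nodes to discover explicitly by setting the following two parameters in elasticsearch.yml: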

discovery.zen.ping.multicast.enabled: false

discovery.zen.ping.unicast.hosts: ["192.168.247.135", "192.168.247.133"]

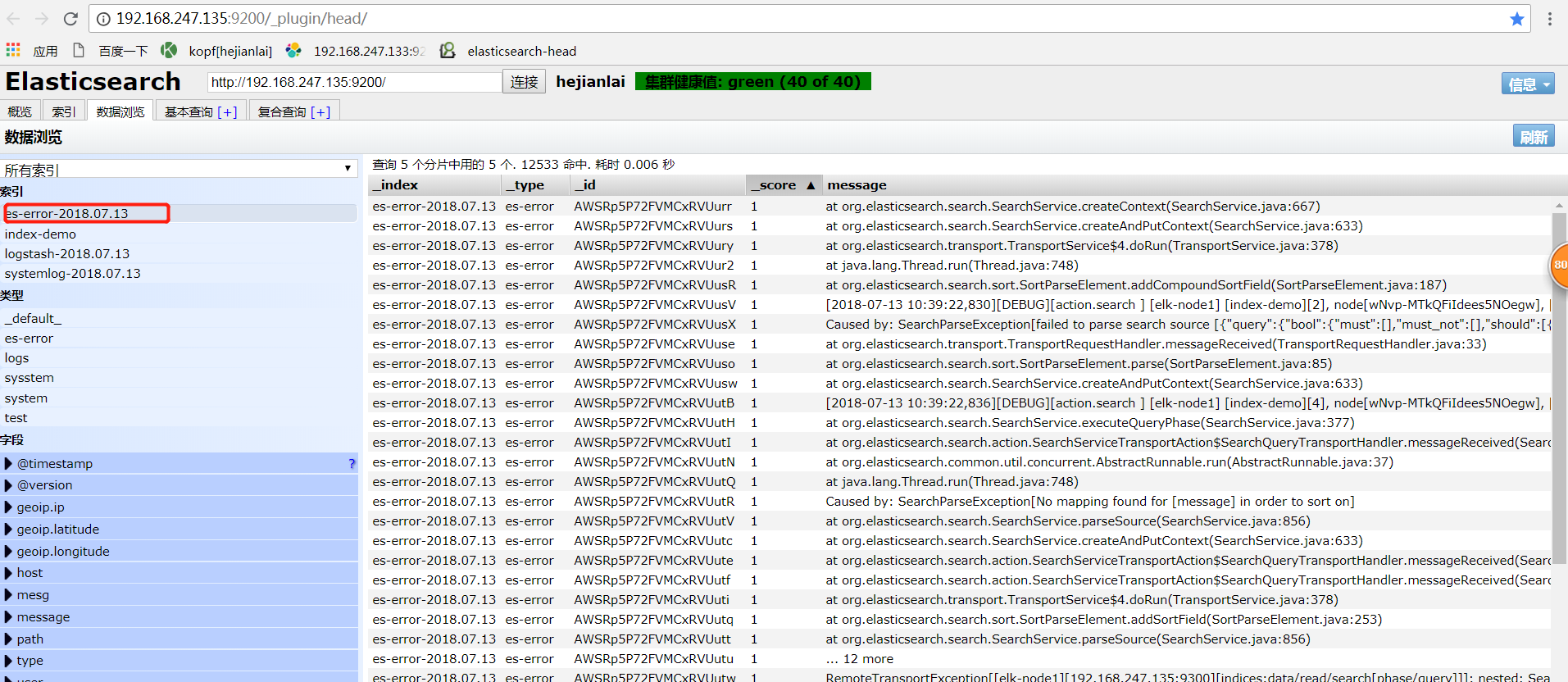

Restart elk-node1, start elk-node2, then visit: 192.168.247.135:9200/_plugin/head/
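Besides the head plugin, cluster formation can be confirmed from the command line through the `_cluster/health` endpoint, whose JSON response includes `status` and `number_of_nodes`. The sketch below pulls a single field out of that response with `sed`; the helper names `cluster_health` and `health_field` are hypothetical, and the sample JSON in the comment is illustrative rather than captured from this cluster.

```shell
# Sketch: read one field from the _cluster/health response.
# cluster_health and health_field are made-up helper names.

cluster_health() {
    # $1 = Elasticsearch host, e.g. 192.168.247.135
    curl -s "http://$1:9200/_cluster/health"
}

health_field() {
    # $1 = field name; the JSON document is read from stdin.
    # Works for the flat key/value JSON that _cluster/health returns.
    sed -n 's/.*"'"$1"'"[[:space:]]*:[[:space:]]*"\{0,1\}\([^",}]*\).*/\1/p' | head -n 1
}

# Illustrative input, not real output from this cluster:
#   {"cluster_name":"hejianlai","status":"green","number_of_nodes":2}
# Example (not run here):
#   cluster_health 192.168.247.135 | health_field number_of_nodes
```

With both nodes joined, `number_of_nodes` should report 2; a value of 1 suggests the unicast host list or the network between the nodes needs another look.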

Installing the kopf monitoring plugin

[root@elk-node1 ~]# /usr/share/elasticsearch/bin/plugin install lmenezes/elasticsearch-kopf

-> Installing lmenezes/elasticsearch-kopf...

Trying https://github.com/lmenezes/elasticsearch-kopf/archive/master.zip ...

Downloading .........................................................................................................................DONE

Verifying https://github.com/lmenezes/elasticsearch-kopf/archive/master.zip checksums if available ...

NOTE: Unable to verify checksum for downloaded plugin (unable to find .sha1 or .md5 file to verify)

Installed kopf into /usr/share/elasticsearch/plugins/kopf

Access URL: http://192.168.247.135:9200/_plugin/kopf/#!/cluster

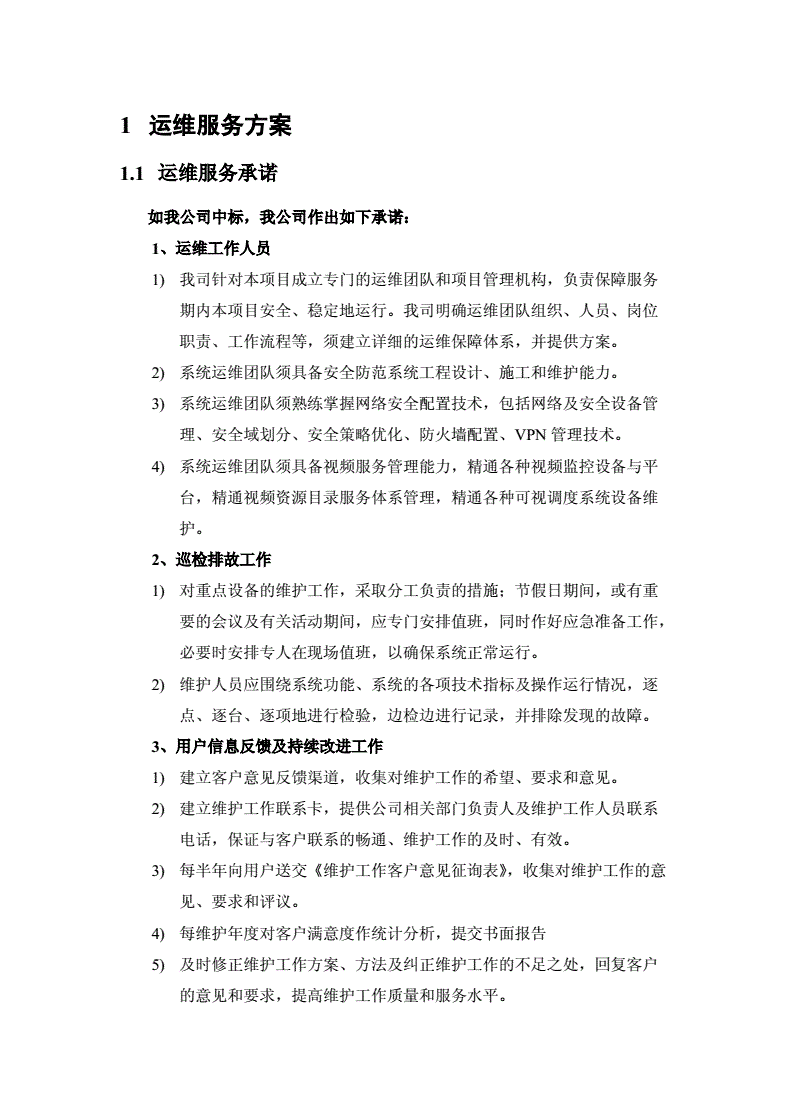

IV. Installing Logstash

1) Download and install the GPG key

[root@elk-node1 ~]# rpm --import https://packages.elastic.co/GPG-KEY-elasticsearch

2) Add the yum repository

[root@elk-node1 ~]# vim /etc/yum.repos.d/logstash.repo

[logstash-2.1]

name=Logstash repository for 2.1.x packages

baseurl=http://packages.elastic.co/logstash/2.1/centos

gpgcheck=1

gpgkey=http://packages.elastic.co/GPG-KEY-elasticsearch

enabled=1

3) Install Logstash

[root@elk-node1 ~]# yum install -y logstash

4) Test with sample data

# Simple input and output

[root@elk-node1 ~]# /opt/logstash/bin/logstash -e 'input { stdin{} } output { stdout{} }'

Settings: Default filter workers: 1

Logstash startup completed

hello world

2018-07-13T00:54:34.497Z elk-node1 hello world

hi hejianlai

2018-07-13T00:54:44.453Z elk-node1 hi hejianlai

来宾张家辉

2018-07-13T00:55:35.278Z elk-node1 来宾张家辉

^CSIGINT received. Shutting down the pipeline. {:level=>:warn}

Logstash shutdown completed

# The rubydebug codec prints each event in detail

[root@elk-node1 ~]# /opt/logstash/bin/logstash -e 'input { stdin{} } output { stdout{ codec => rubydebug } }'

Settings: Default filter workers: 1

Logstash startup completed

mimi

{

"message" => "mimi",

"@version" => "1",

"@timestamp" => "2018-07-13T00:58:59.980Z",

"host" => "elk-node1"

}

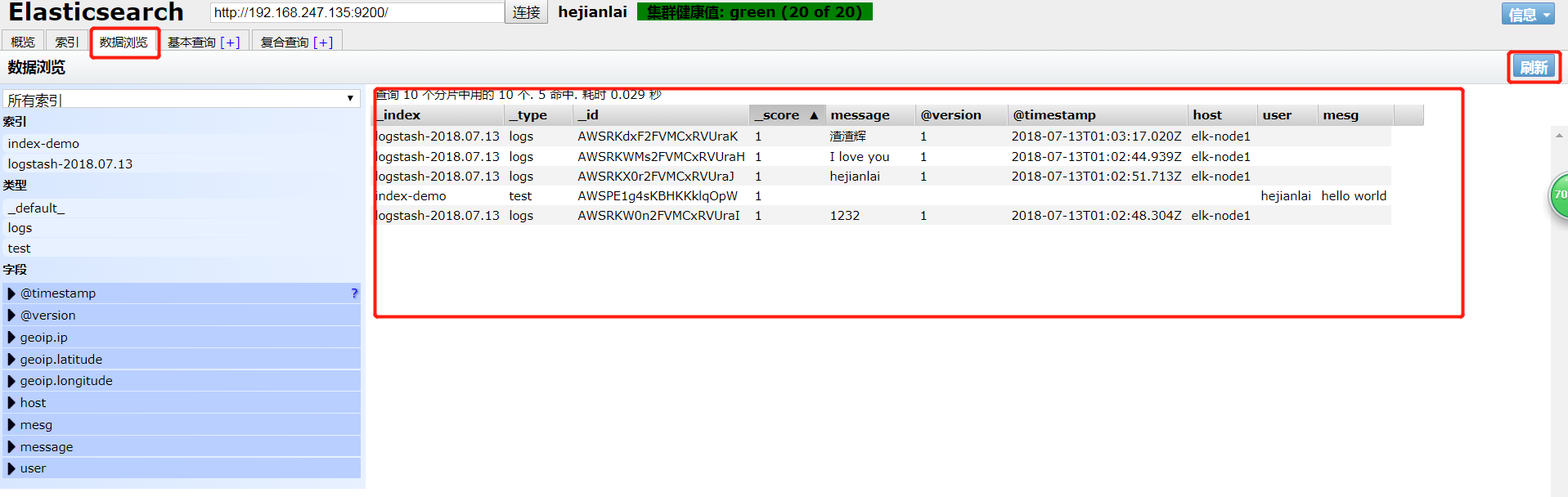

# Write the input into Elasticsearch

[root@elk-node1 ~]# /opt/logstash/bin/logstash -e 'input { stdin{} } output { elasticsearch{hosts=>["192.168.247.135"]} }'

Settings: Default filter workers: 1

Logstash startup completed

I love you

1232

hejianlai

渣渣辉

^CSIGINT received. Shutting down the pipeline. {:level=>:warn}

Logstash shutdown completed

[root@elk-node1 ~]# /opt/logstash/bin/logstash -e 'input { stdin{} } output { elasticsearch { hosts => ["192.168.247.135:9200"]} stdout{ codec => rubydebug}}'

Settings: Default filter workers: 1

Logstash startup completed

广州

{

"message" => "广州",

"@version" => "1",

"@timestamp" => "2018-07-13T02:17:40.800Z",

"host" => "elk-node1"

}

hehehehehehehe

{

"message" => "hehehehehehehe",

"@version" => "1",

"@timestamp" => "2018-07-13T02:17:49.400Z",

"host" => "elk-node1"

}

Writing Logstash log-collection configuration files

# Interactive input

[root@elk-node1 ~]# cat /etc/logstash/conf.d/logstash-01.conf

input { stdin { } }

output {

elasticsearch { hosts => ["192.168.247.135:9200"]}

stdout { codec => rubydebug }

}

Run the command

[root@elk-node1 ~]# /opt/logstash/bin/logstash -f /etc/logstash/conf.d/logstash-01.conf

Settings: Default filter workers: 1

Logstash startup completed

l o v e

{

"message" => "l o v e",

"@version" => "1",

"@timestamp" => "2018-07-13T02:37:42.670Z",

"host" => "elk-node1"

}

地久梁朝伟

{

"message" => "地久梁朝伟",

"@version" => "1",

"@timestamp" => "2018-07-13T02:38:20.049Z",

"host" => "elk-node1"

}

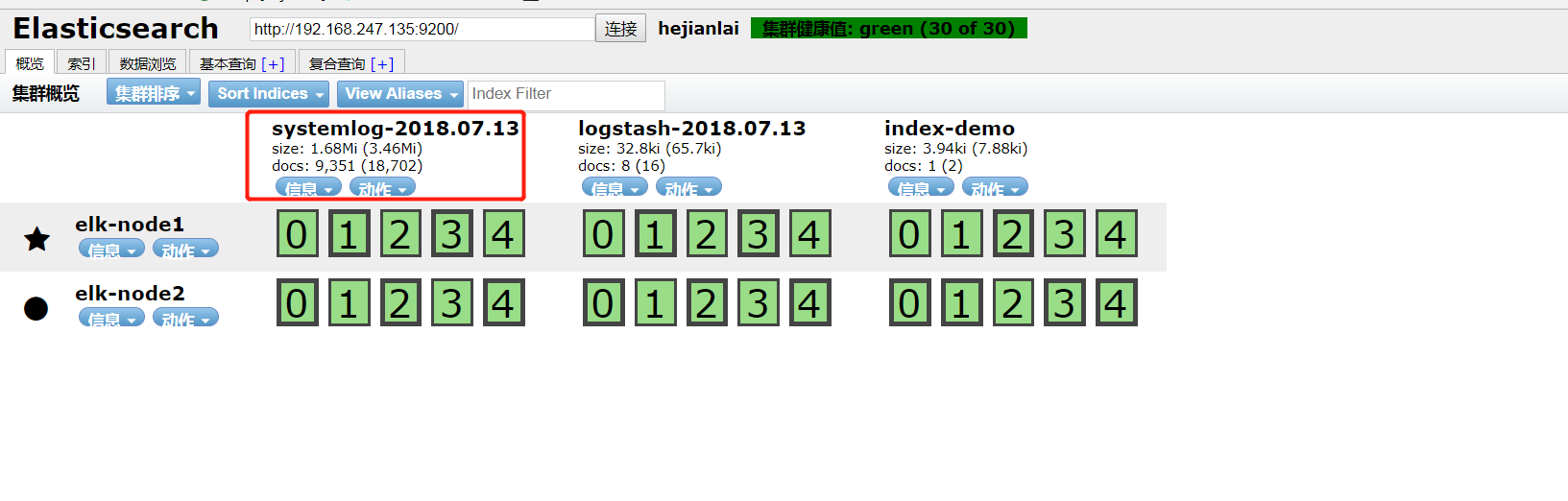

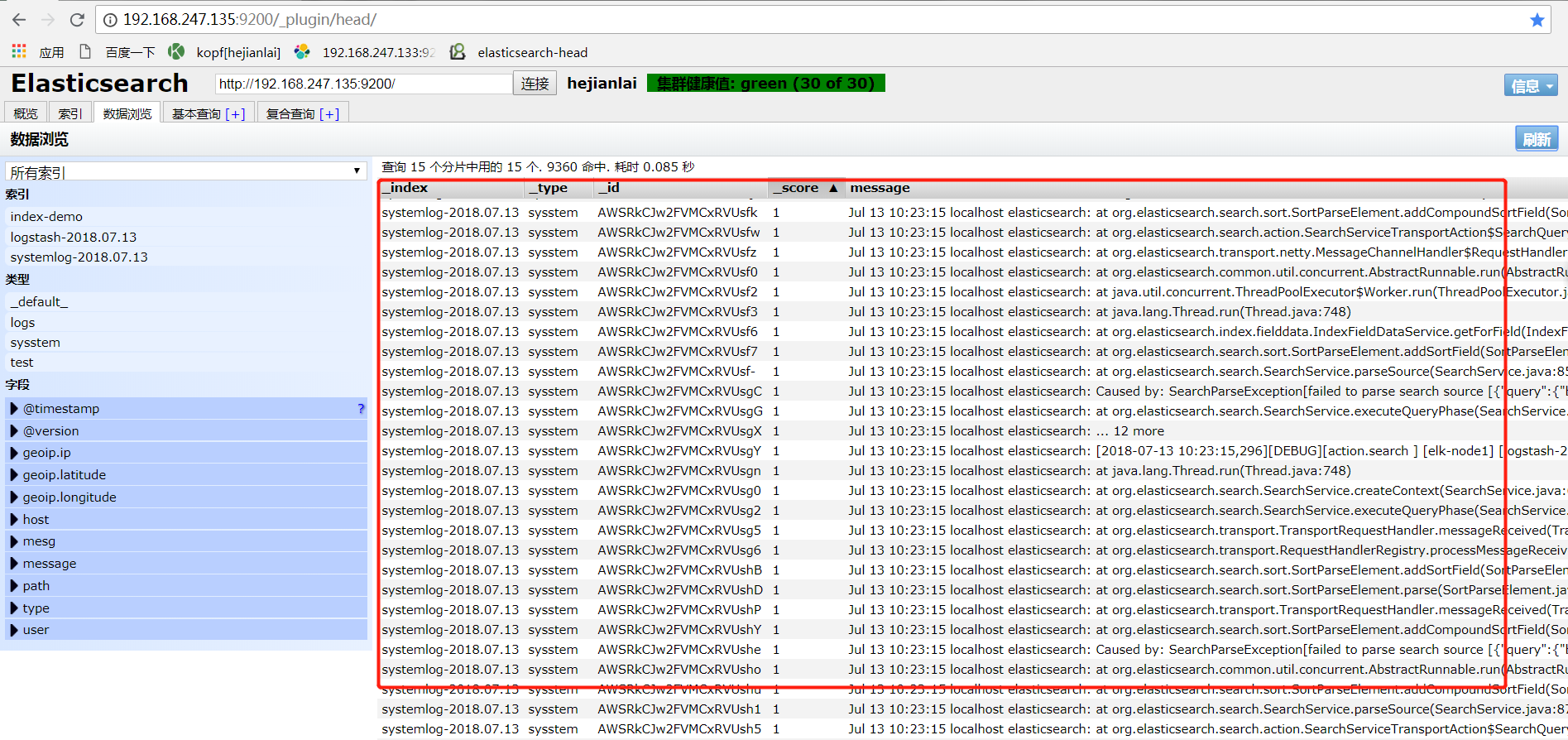

# Collect the system log

[root@elk-node1 conf.d]# cat /etc/logstash/conf.d/systemlog.conf

input{

file {

path => "/var/log/messages"

type => "system"

start_position => "beginning"

}

}

output{

elasticsearch{

hosts => ["192.168.247.135:9200"]

index => "systemlog-%{+YYYY.MM.dd}"

}

}
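The `index => "systemlog-%{+YYYY.MM.dd}"` setting makes Logstash write one index per day, named after each event's `@timestamp`, which is in UTC. Knowing the resulting name is handy when querying a single day. The following sketch computes it with GNU `date`; the function name `index_for_date` is hypothetical.

```shell
# Sketch: compute the daily index name that a pattern such as
# "systemlog-%{+YYYY.MM.dd}" produces. index_for_date is a made-up name.
index_for_date() {
    # $1 = index prefix, $2 = any date GNU date understands (default: today)
    prefix="$1"
    day="${2:-today}"
    # -u: Logstash derives the name from the UTC @timestamp
    printf '%s-%s\n' "$prefix" "$(date -u -d "$day" +%Y.%m.%d)"
}

# Example (not run here): count today's documents in the system log index
#   curl -s "http://192.168.247.135:9200/$(index_for_date systemlog)/_count?pretty"
```

Because the name follows UTC, events logged shortly after local midnight in CST still land in the previous day's index.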

# Run it in the background

[root@elk-node1 conf.d]# /opt/logstash/bin/logstash -f /etc/logstash/conf.d/systemlog.conf &

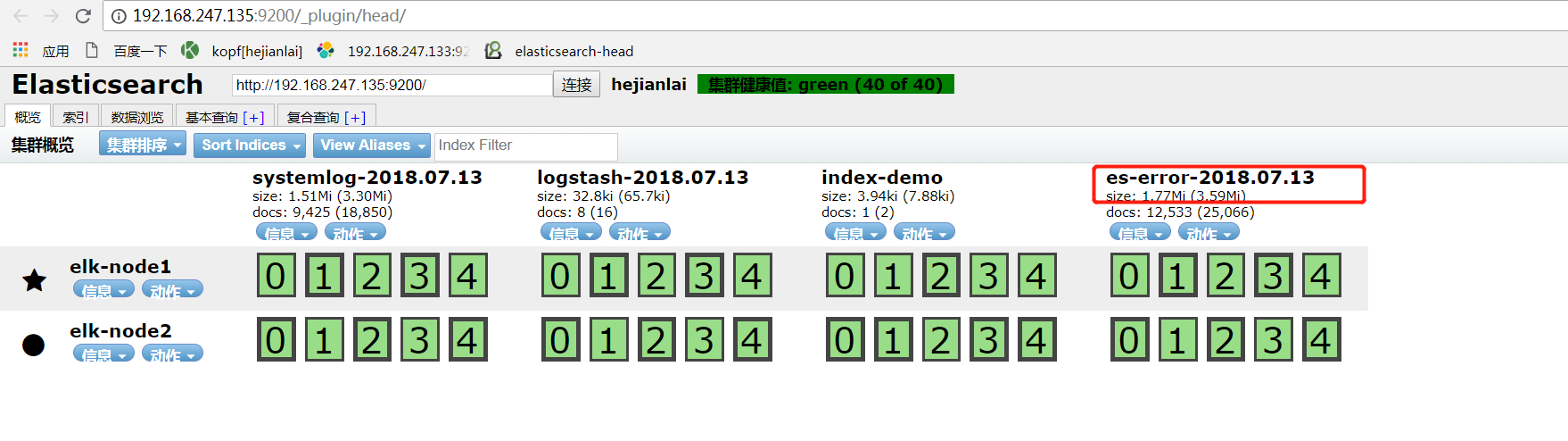

Writing a configuration file that collects the ELK error log

[root@elk-node1 ~]# cat /etc/logstash/conf.d/elk_log.conf

input {

file {

path => "/var/log/messages"

type => "system"

start_position => "beginning"

}

}

input {

file {

path => "/var/log/elasticsearch/hejianlai.log"

type => "es-error"

start_position => "beginning"

codec => multiline {

pattern => "^\[" # regex: a line that starts with [ begins a new event

negate => true

what => "previous"

}

}

}

output {

if [type] == "system"{

elasticsearch {

hosts => ["192.168.247.135:9200"]

index => "systemlog-%{+YYYY.MM.dd}"

}

}

if [type] == "es-error"{

elasticsearch {

hosts => ["192.168.247.135:9200"]

index => "es-error-%{+YYYY.MM.dd}"

}

}

}
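The multiline codec in this configuration says: a line that does not match `^\[` (negate => true) belongs to the previous line (what => "previous"). Java stack traces in the Elasticsearch log are thereby folded into the event that starts with the bracketed timestamp. The awk sketch below imitates only that grouping rule so its effect can be tried on sample text; it joins lines with " | " for readability, whereas Logstash keeps the newlines. The function name `group_multiline` is hypothetical.

```shell
# Sketch: imitate the grouping rule of
#   multiline { pattern => "^\[" negate => true what => "previous" }
# group_multiline is a made-up name; this is not how Logstash is implemented.
group_multiline() {
    awk '
        # A line starting with "[" closes the current event and opens a new one.
        /^\[/ { if (have) print event; event = $0; have = 1; next }
        # Any other line is appended to the event before it.
              { if (have) event = event " | " $0; else { event = $0; have = 1 } }
        END   { if (have) print event }
    '
}

# Example (not run here):
#   group_multiline < /var/log/elasticsearch/hejianlai.log | head
```

Each output line then corresponds to one event as Elasticsearch would receive it, which makes it easier to judge whether the pattern fits a given log format.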

# Run it in the background

[root@elk-node1 ~]# /opt/logstash/bin/logstash -f /etc/logstash/conf.d/elk_log.conf &

[1] 28574

[root@elk-node1 ~]# Settings: Default filter workers: 1

Logstash startup completed
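A pipeline started with a bare `&` dies with a syntax error only after it is already in the background, and it stops when the terminal closes. One possible improvement is to validate the file first with the `--configtest` flag of Logstash 2.x and then start it under `nohup`. This is a sketch under those assumptions; the function name `start_pipeline`, the `LOGSTASH_BIN` variable, and the log file argument are made up for illustration.

```shell
# Sketch: validate a Logstash config, then start it in the background.
# start_pipeline and LOGSTASH_BIN are hypothetical names.
LOGSTASH_BIN="${LOGSTASH_BIN:-/opt/logstash/bin/logstash}"

start_pipeline() {
    conf="$1"      # pipeline configuration file
    logfile="$2"   # where stdout/stderr of the background process go
    if [ ! -r "$conf" ]; then
        echo "cannot read $conf" >&2
        return 2
    fi
    # --configtest parses the file and exits without running the pipeline
    "$LOGSTASH_BIN" --configtest -f "$conf" || return 1
    # nohup keeps the process alive after the login shell exits
    nohup "$LOGSTASH_BIN" -f "$conf" >"$logfile" 2>&1 &
    echo "started, pid $!"
}

# Example (not run here):
#   start_pipeline /etc/logstash/conf.d/elk_log.conf /var/log/logstash/elk_log.out
```

The package also installs a service script, so for a permanent setup running Logstash as a service is likely the better choice; the function above only tidies up the ad hoc approach used here.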

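V. Installing Kibana

Official download page: https://www.elastic.co/downloads/kibana

The latest official release, 6.3.1, is too new for this setup: Kibana 6.x expects Elasticsearch 6.x, while the cluster here runs 2.4.6, and it caused a lot of trouble after download. I fell back to 4.3.1 for now.

1) Install Kibana: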

[root@elk-node1 local]# cd /usr/local/

[root@elk-node1 local]# wget https://artifacts.elastic.co/downloads/kibana/kibana-4.3.1-linux-x64.tar.gz

[root@elk-node1 local]# tar -xf kibana-4.3.1-linux-x64.tar.gz

[root@elk-node1 local]# ln -s /usr/local/kibana-4.3.1-linux-x64 /usr/local/kibana

[root@elk-node1 local]# cd kibana

[root@elk-node1 kibana]# ls

bin config installedPlugins LICENSE.txt node node_modules optimize package.json README.txt src webpackShims

2) Edit the configuration file:

[root@elk-node1 kibana]# cd config/

[root@elk-node1 config]# pwd

/usr/local/kibana/config

[root@elk-node1 config]# vim kibana.yml

[root@elk-node1 config]# grep -Ev "^#|^$" kibana.yml

server.port: 5601

server.host: "0.0.0.0"

elasticsearch.url: "http://192.168.247.135:9200"

kibana.index: ".kibana"

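3) screen is a full-screen window manager that multiplexes a physical terminal between several processes (typically interactive shells). Each virtual terminal provides the functions of a DEC VT100.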

[root@elk-node1 local]# yum install -y screen

4) Start screen, run Kibana inside it, then press Ctrl+a followed by d to detach, so that Kibana keeps running in its own window.

[root@elk-node1 config]# screen

[root@elk-node1 config]# /usr/local/kibana/bin/kibana

log [02:23:34.148] [info][status][plugin:kibana@6.3.1] Status changed from uninitialized to green - Ready

log [02:23:34.213] [info][status][plugin:elasticsearch@6.3.1] Status changed from uninitialized to yellow - Waiting for Elasticsearch

log [02:23:34.216] [info][status][plugin:xpack_main@6.3.1] Status changed from uninitialized to yellow - Waiting for Elasticsearch

log [02:23:34.221] [info][status][plugin:searchprofiler@6.3.1] Status changed from uninitialized to yellow - Waiting for Elasticsearch

log [02:23:34.224] [info][status][plugin:ml@6.3.1] Status changed from uninitialized to yellow - Waiting for Elasticsearch

[root@elk-node1 config]# screen -ls

There are screens on:

29696.pts-0.elk-node1 (Detached)

[root@elk-node1 kibana]# /usr/local/kibana/bin/kibana

log [11:25:37.557] [info][status][plugin:kibana] Status changed from uninitialized to green - Ready

log [11:25:37.585] [info][status][plugin:elasticsearch] Status changed from uninitialized to yellow - Waiting for Elasticsearch

log [11:25:37.600] [info][status][plugin:kbn_vislib_vis_types] Status changed from uninitialized to green - Ready

log [11:25:37.602] [info][status][plugin:markdown_vis] Status changed from uninitialized to green - Ready

log [11:25:37.604] [info][status][plugin:metric_vis] Status changed from uninitialized to green - Ready

log [11:25:37.606] [info][status][plugin:spyModes] Status changed from uninitialized to green - Ready

log [11:25:37.608] [info][status][plugin:statusPage] Status changed from uninitialized to green - Ready

log [11:25:37.612] [info][status][plugin:table_vis] Status changed from uninitialized to green - Ready

log [11:25:37.647] [info][listening] Server running at http://0.0.0.0:5601

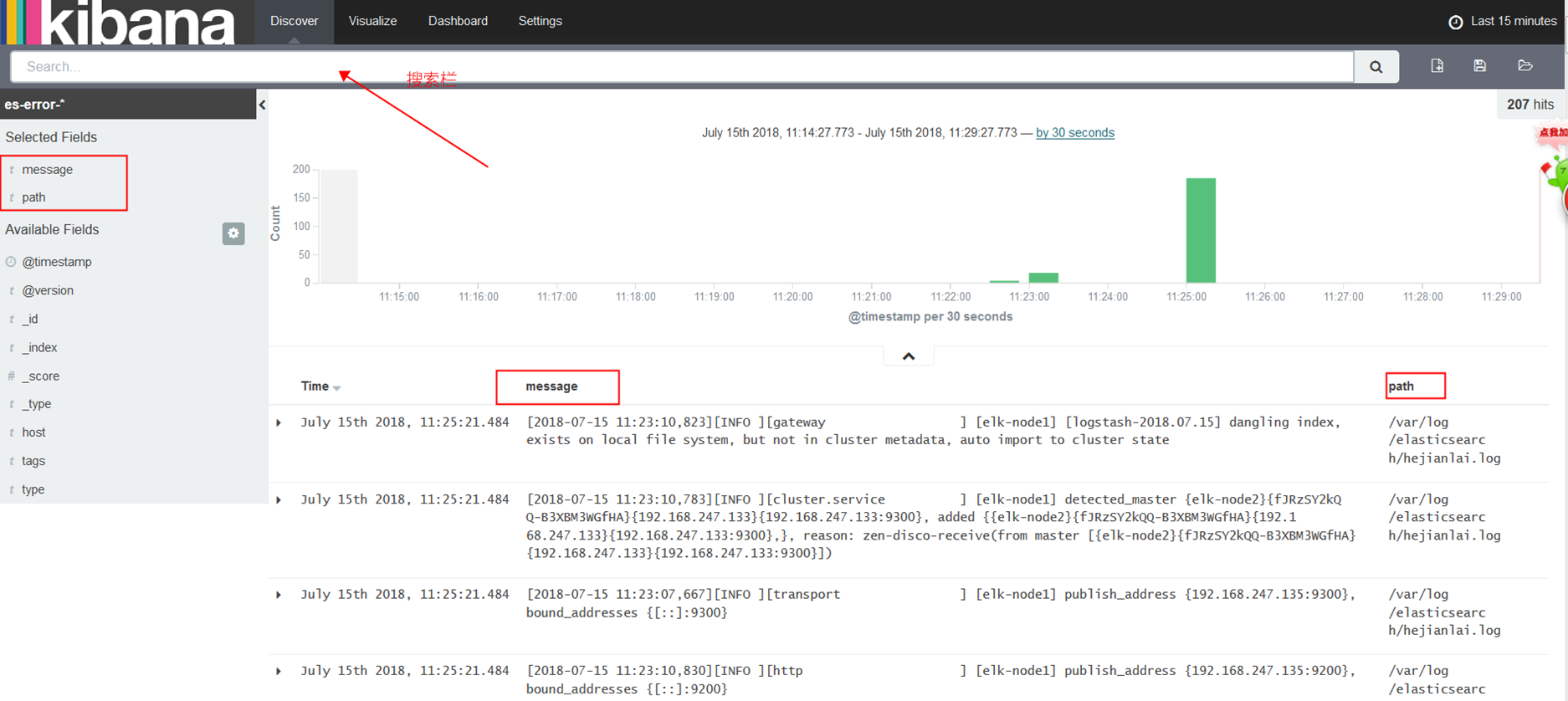

VI. Basic Kibana Usage

Kibana URL: http://192.168.247.135:5601

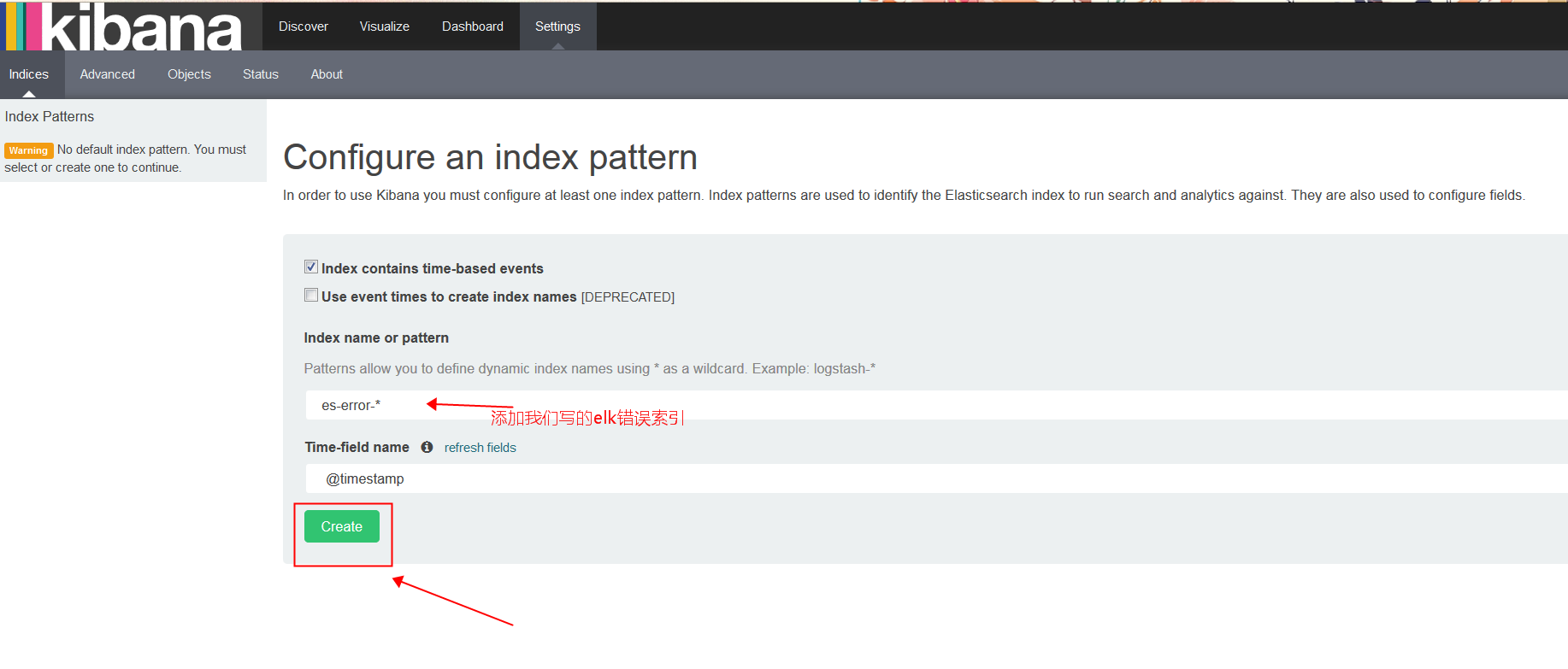

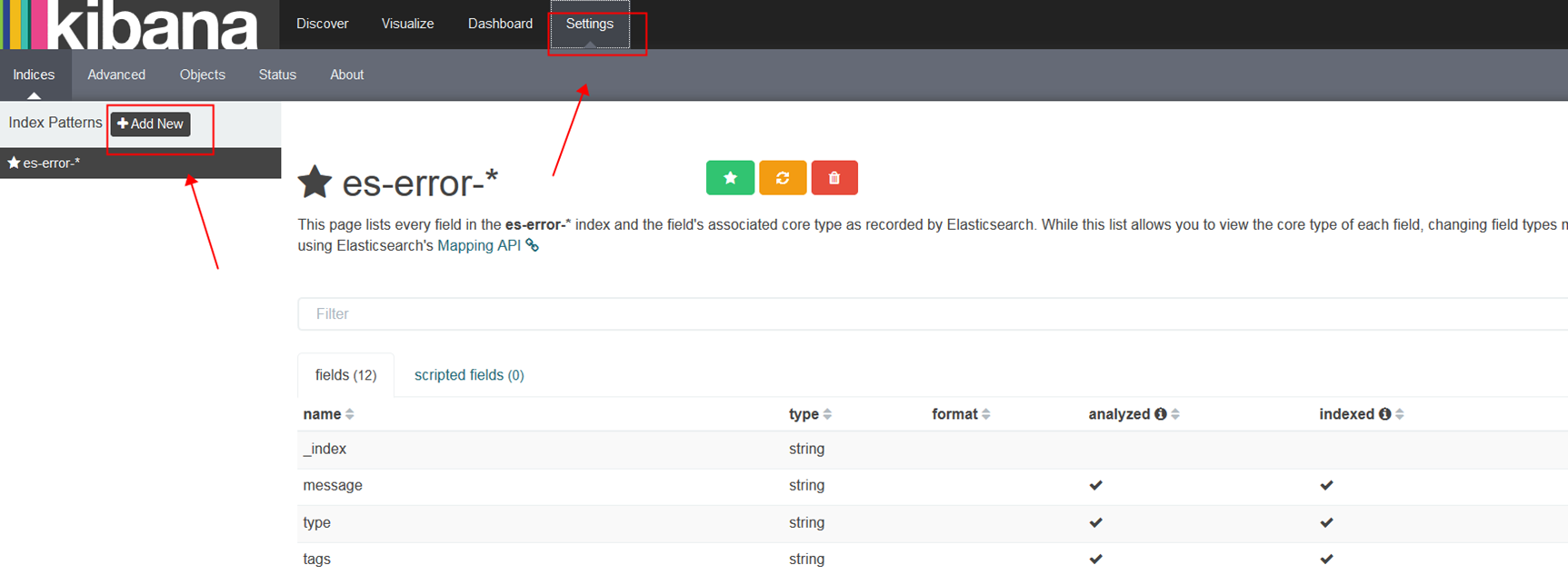

On first login, we create an index pattern for the ELK es-error index

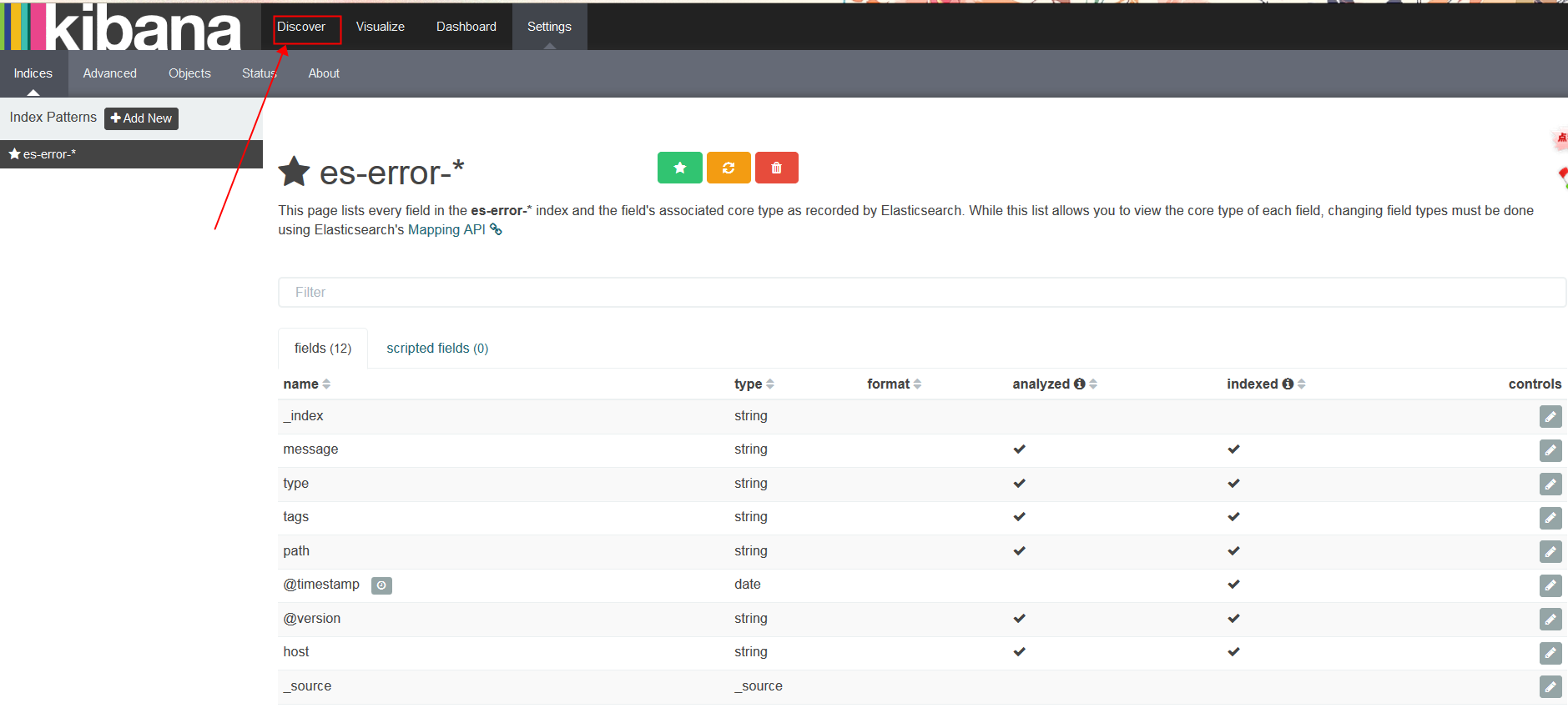

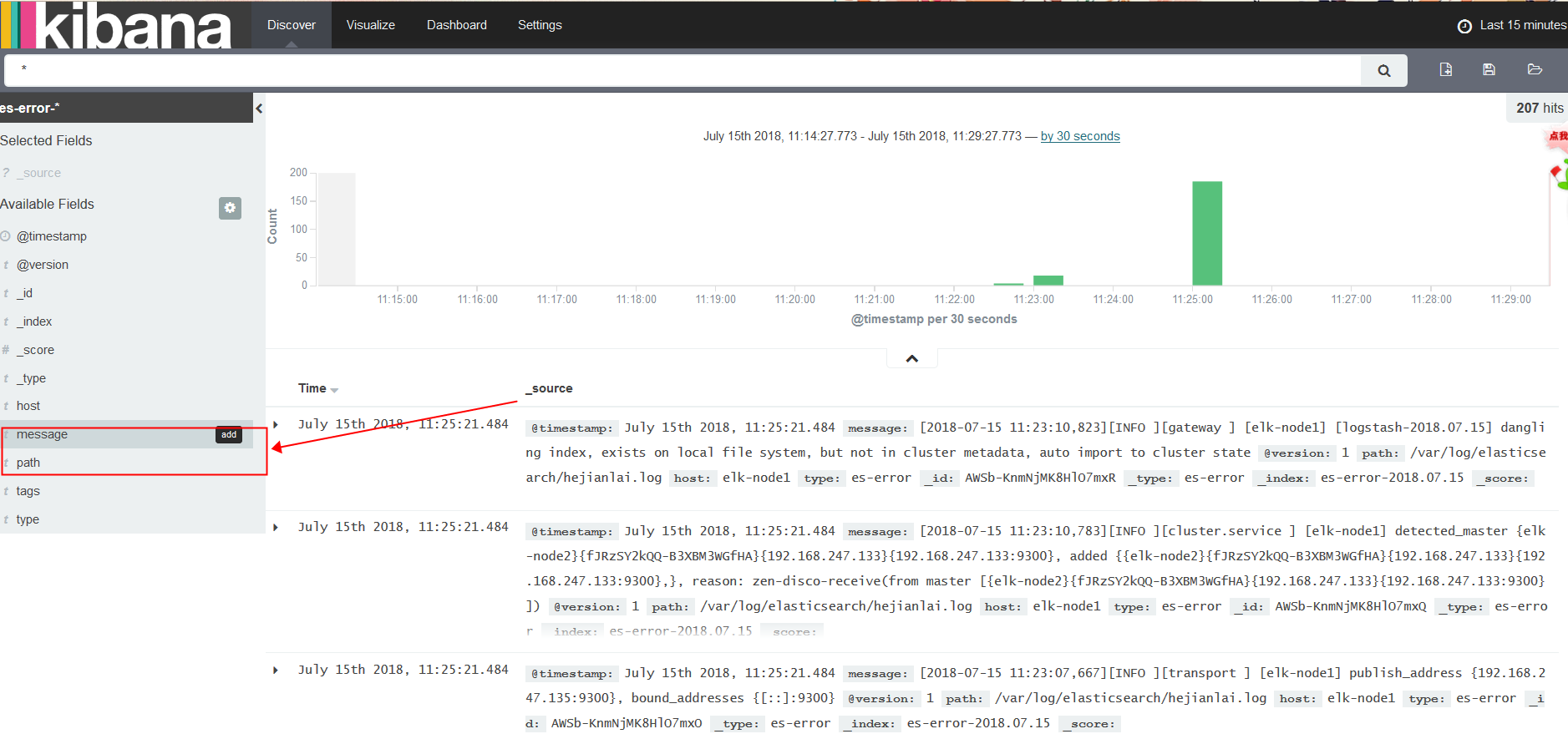

Add the message and path fields

Using the search bar, we search for the keyword soft

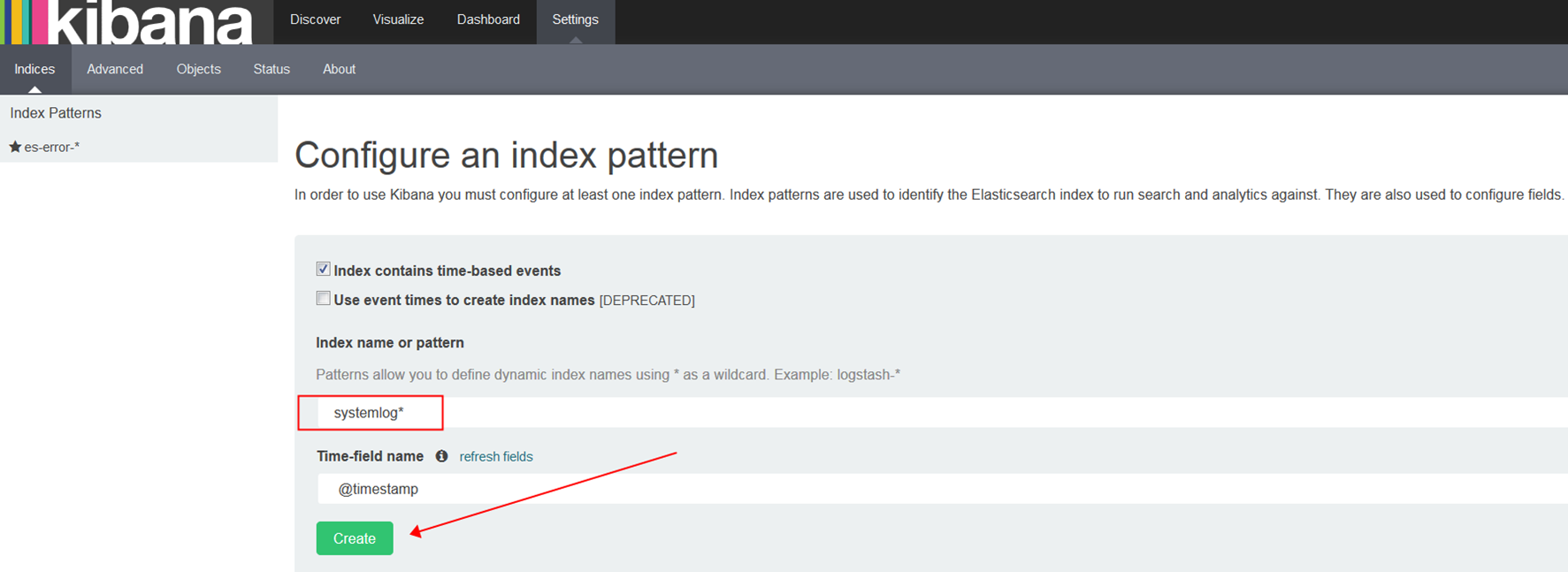

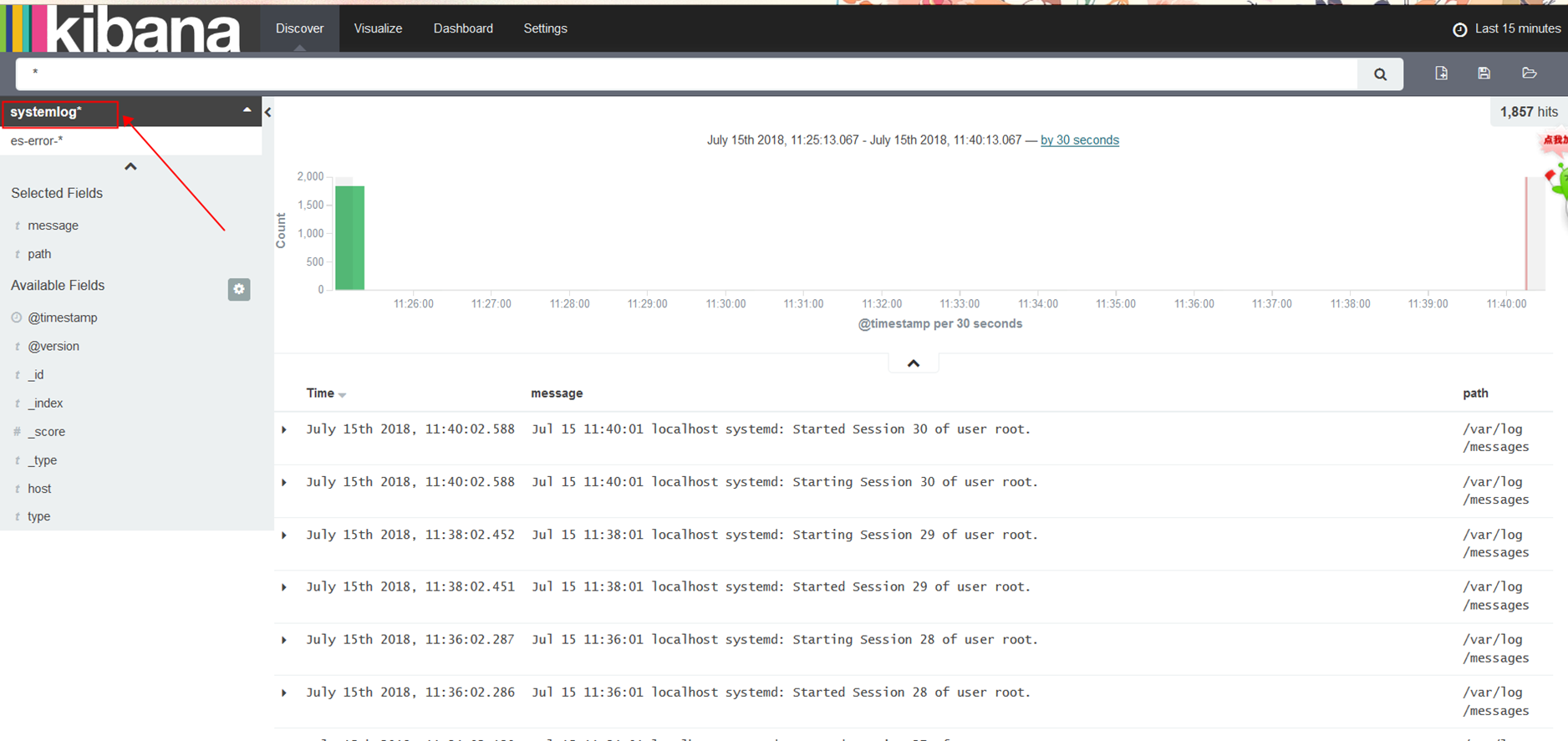

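Next we add the systemlog index written earlier

The * is a wildcard that matches the date suffix of every daily index

That's it: the ELK log platform is basically up and running, and it was exhausting to get here. From now on, write a collection config for each application that needs monitoring and add its index to Kibana as required.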
